You track your web applications. You inventory your APIs. But is anybody monitoring your AI servers?

Just last week, research found more than 175,000 exposed instances of Ollama, a popular server for self-hosting LLMs.

Across enterprises, self-hosted model servers are being deployed on cloud VMs and GPU-backed instances to power copilots, internal automation, and experimental AI features. They are fast to spin up and just as easy to expose, yet they are rarely treated as first-class attack surface assets.

If one of those servers is publicly reachable without proper authentication, it is an exposed service.

Traditional vulnerability management workflows may not flag these systems because they are not categorized as web applications or APIs. They sit in a gray area, neither infrastructure-as-usual nor formally productionized.

That gray area is precisely where attackers look.

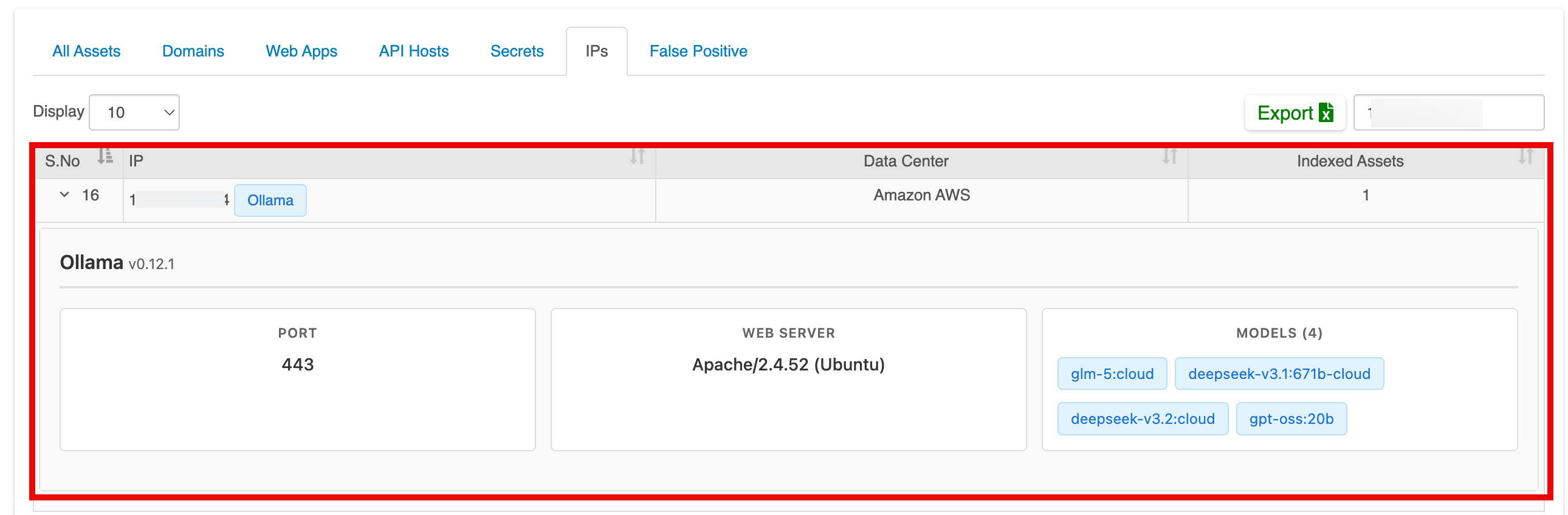

With this release, Indusface WAS introduces AI Server Detection, a capability that brings AI model servers into your attack surface visibility framework. The goal is simple: if an AI server is publicly accessible across your discovered IP space, you should not be the last to know.

The AI Exposure Blind Spot

Across organizations, AI model servers are being deployed in ways that bypass traditional security onboarding. A developer provisions a GPU-backed virtual machine to test a large language model. Another team launches a model server to power an internal chatbot. A proof-of-concept quietly becomes semi-permanent. Over time, these instances accumulate.

They are rarely added to centralized asset inventories. They may not pass through formal change management. Authentication controls are often weak, inconsistently configured, or absent altogether.

These servers listen on network ports. If those ports are reachable from the internet, the services become discoverable through routine scanning. For example, Ollama serves its API on port 11434 by default. If the service is deployed behind a web server or reverse proxy, it may also be accessible through standard web ports such as 80 (HTTP) or 443 (HTTPS). If any of these ports is publicly accessible, the model server becomes discoverable and is effectively part of the organization’s external attack surface, regardless of whether the deployment was intended to be internal.
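To make the reachability point concrete, here is a minimal Python sketch that checks whether the ports named above accept TCP connections on a host in your own address space. It illustrates the idea only and is not how Indusface WAS performs discovery; the function names `is_port_open` and `reachable_ports` and the `CANDIDATE_PORTS` tuple are assumptions made for this example.

```python
import socket

# Ports named in the text: Ollama's default API port plus standard web ports.
# This tuple is an assumption for illustration, not an exhaustive list.
CANDIDATE_PORTS = (11434, 80, 443)


def is_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def reachable_ports(host: str, ports=CANDIDATE_PORTS, timeout: float = 2.0) -> list:
    """Return the subset of ports on host that accept a TCP connection."""
    return [port for port in ports if is_port_open(host, port, timeout)]
```

Run from a vantage point outside your network, `reachable_ports("<your-public-ip>")` returns the ports an external scanner could also reach. An open port alone does not confirm an AI server is behind it; that requires looking at what the service returns.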

In addition, AI environments are deployed quickly to meet business demand. They may reside in loosely governed cloud accounts. Network security groups may be broadly permissive during testing and never tightened. “Internal-only” assumptions are made without validating external reachability.

Meanwhile, internet-wide scanning is constant. Publicly reachable services are enumerated and fingerprinted at scale. An exposed AI server can reveal version identifiers, service metadata, and hosted model information. In some cases, inference endpoints can be queried directly.
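As a rough illustration of how little effort this fingerprinting takes, the Python sketch below queries two endpoints from Ollama's HTTP API, `/api/version` and `/api/tags`, without credentials and summarizes what comes back. It is a simplified example under stated assumptions, not the detection logic used by any particular scanner or by Indusface WAS; the helper names `fetch_json`, `summarize_ollama`, and `probe_ollama`, and the shape of the returned dictionary, are hypothetical.

```python
import http.client
import json
import urllib.request
from urllib.error import URLError


def fetch_json(url: str, timeout: float = 3.0):
    """GET a URL without credentials; return parsed JSON, or None on any failure."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return json.loads(resp.read().decode("utf-8"))
    except (URLError, OSError, ValueError, http.client.HTTPException):
        return None


def summarize_ollama(version_doc, tags_doc):
    """Build a finding from parsed /api/version and /api/tags response bodies.

    Returns None when the version response does not look like Ollama's.
    """
    if not isinstance(version_doc, dict) or "version" not in version_doc:
        return None
    models = []
    if isinstance(tags_doc, dict):
        # /api/tags lists locally available models under the "models" key.
        models = [m.get("name") for m in (tags_doc.get("models") or [])
                  if isinstance(m, dict)]
    return {
        "service": "ollama",
        "version": version_doc["version"],
        "models": models,
        # The data was obtained without credentials, so no auth was enforced.
        "unauthenticated": True,
    }


def probe_ollama(base_url: str, timeout: float = 3.0):
    """Probe a base URL such as http://host:11434 for an open Ollama API."""
    base = base_url.rstrip("/")
    version_doc = fetch_json(base + "/api/version", timeout)
    if version_doc is None:
        return None
    tags_doc = fetch_json(base + "/api/tags", timeout)
    return summarize_ollama(version_doc, tags_doc)
```

If `probe_ollama` returns a result for a host you own, anyone on the internet can obtain the same version and model list with two unauthenticated GET requests.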

AI servers consume significant compute resources, host operational models, and may integrate with proprietary data sources. Exposure can lead to infrastructure abuse, escalating costs, and unintended data disclosure.

By the time abnormal usage appears in logs, exposure has already happened.

The Security Impact of Exposed AI Servers

Exposed AI infrastructure introduces risks that extend beyond traditional service misconfiguration.

- First, there is compute abuse. Large language model inference is resource-intensive. Unauthorized access can lead to GPU exhaustion, degraded performance for legitimate users, and unexpected infrastructure costs. Abuse does not require sophisticated exploitation. It only requires accessibility.

- Second, there is reconnaissance value. AI model servers often expose version identifiers and service metadata. This information helps attackers understand which technologies you are running and whether known vulnerabilities might apply.

- Third, there is the risk of indirect data exposure. Many AI systems are connected to internal knowledge repositories or fine-tuned on proprietary datasets. If an exposed endpoint allows structured queries, even partial responses can reveal internal context.

- Finally, there is a broader issue of unmanaged attack surface expansion. Every externally reachable service increases complexity. AI infrastructure should not exist outside your visibility model.

Introducing AI Server Detection in Indusface WAS

This feature identifies publicly accessible AI model servers across your discovered IP ranges during the standard discovery workflow. AI server identification is integrated directly into the existing discovery process and relies on lightweight fingerprinting techniques that safely analyze exposed services without interfering with production workloads.

AI Server Detection operates within the passive discovery mechanisms already embedded in Indusface WAS. As your external assets are mapped and analyzed, AI model server fingerprints are identified where present.

If a publicly accessible AI server is detected, contextual information is surfaced, including service presence, version indicators where available, open ports, and authentication posture insights. This transforms AI infrastructure from an unknown or assumed asset into a visible and manageable component of your attack surface.

By embedding detection into routine asset discovery, AI infrastructure becomes part of your normal security cadence.

For more information about AI Server Detection in Indusface WAS and how it can enhance your asset visibility, connect with the Indusface team.
